With the rapid development of the Internet, the number of Internet users, the variety of applications, and network bandwidth are all growing explosively. In particular, P2P technology and online video have had a widespread impact on the Internet. For network operators and enterprise customers, most Internet content resides outside their own networks, so users must access external networks to reach it. This causes bandwidth congestion, high inter-network settlement charges, and a degraded user experience.

Overview

Designed for network operators and enterprise customers, NAG Cache is a leading Internet traffic caching and network acceleration solution. By analyzing traffic information, it caches popular Internet content, such as web pages, online video, P2P application data, and HTTP files, within the carrier's or enterprise's network. This significantly reduces inter-network settlement charges while improving the user experience.

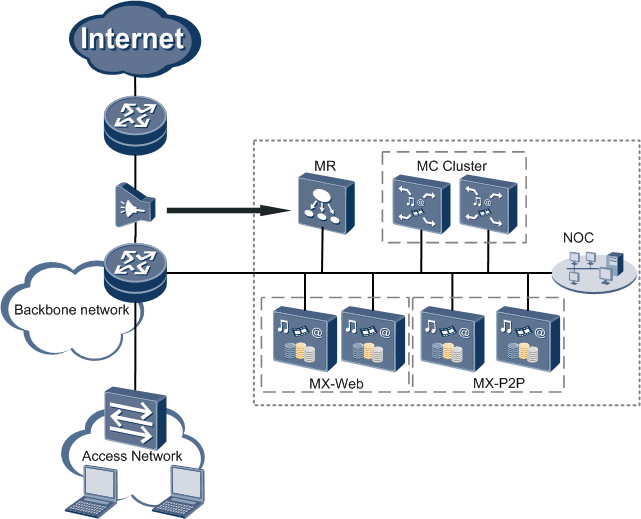

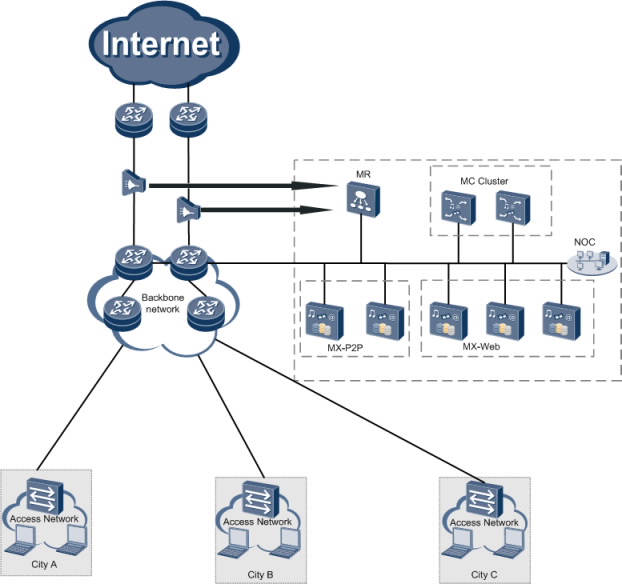

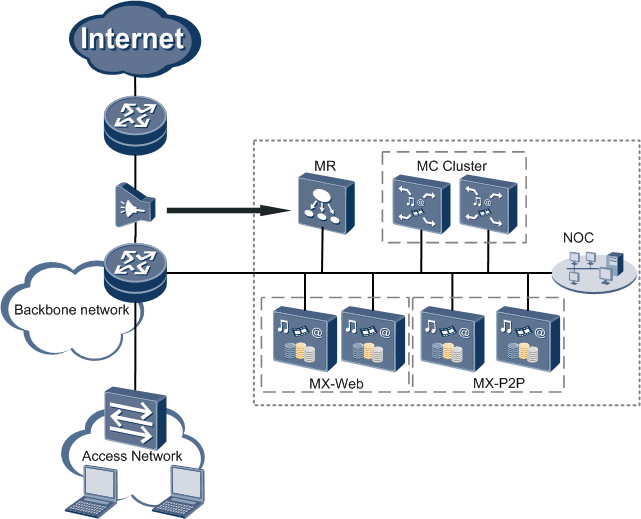

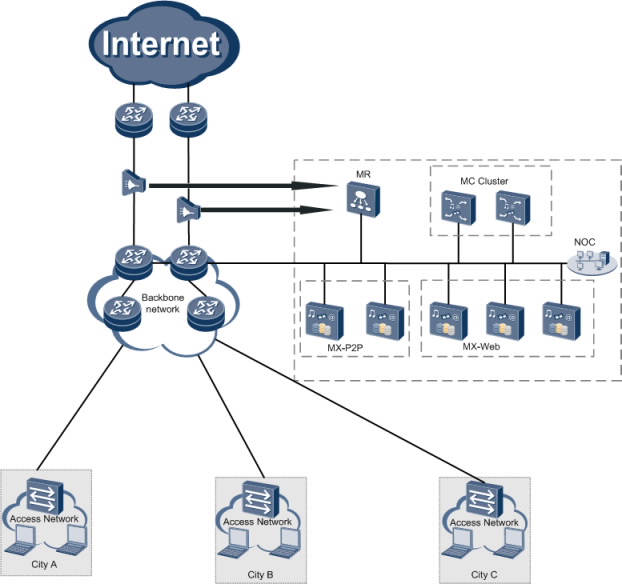

The NAG Cache solution consists of the MR (Media Redirection), MC (Media Control), MX (Media Switch: Web cache/P2P cache), and NOC (Network Operation Center), all running on high-performance servers.

Media Redirection (MR): The MR inspects and identifies Internet traffic, and redirects it to the appropriate cache servers for processing.

Media Control (MC): The MC is the control center of the cache system. It schedules download requests across multiple cache servers according to the configured load-balancing mode.

Media Switch (MX): The MX caches and accelerates traffic for web browsing, large HTTP file downloads, video file downloads, and P2P applications. There are two types of MX: MX-Web and MX-P2P.

Network Operation Center (NOC): The NOC is used to maintain and manage the devices in the cache system.
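To make the division of labor between the MR and MC concrete, the sketch below shows one way the two roles could fit together. It is a minimal illustration under stated assumptions, not Huawei's implementation: the port sets, the BitTorrent handshake check, the pool names `mx-web`/`mx-p2p`, and the least-connections policy are all hypothetical stand-ins for the product's actual traffic identification and load-balancing mode.

```python
from dataclasses import dataclass
from typing import Dict, List

# Hypothetical classification rules; a real MR would use fuller traffic
# identification than destination ports and payload prefixes.
WEB_PORTS = {80, 8080}
P2P_PORTS = set(range(6881, 6890))  # classic BitTorrent port range
BT_HANDSHAKE = b"\x13BitTorrent protocol"  # BitTorrent handshake prefix


@dataclass(frozen=True)
class Flow:
    dst_port: int
    payload_prefix: bytes = b""


def classify(flow: Flow) -> str:
    """MR role (sketch): pick the cache pool for a flow, or 'bypass'."""
    if flow.payload_prefix.startswith(BT_HANDSHAKE) or flow.dst_port in P2P_PORTS:
        return "mx-p2p"
    if flow.dst_port in WEB_PORTS and flow.payload_prefix.startswith((b"GET ", b"HEAD ")):
        return "mx-web"
    return "bypass"


class LeastLoadedScheduler:
    """MC role (sketch): send each download to the least-busy server in a pool."""

    def __init__(self, pools: Dict[str, List[str]]):
        self.pools = pools
        # Active download count per cache server.
        self.active = {s: 0 for servers in pools.values() for s in servers}

    def assign(self, pool: str) -> str:
        # Ties are broken by server name so the choice is deterministic.
        server = min(self.pools[pool], key=lambda s: (self.active[s], s))
        self.active[server] += 1
        return server

    def release(self, server: str) -> None:
        # Called when a download finishes.
        if self.active[server] > 0:
            self.active[server] -= 1


def route(flow: Flow, mc: LeastLoadedScheduler) -> str:
    """Return the chosen cache server, or 'origin' if the flow is not cached."""
    pool = classify(flow)
    return "origin" if pool == "bypass" else mc.assign(pool)
```

For example, with `mc = LeastLoadedScheduler({"mx-web": ["web1", "web2"], "mx-p2p": ["p2p1"]})`, two successive HTTP `GET` flows on port 80 are spread across `web1` and `web2`, a flow to port 6881 goes to `p2p1`, and a TLS flow on port 443 bypasses the cache. Least-connections was chosen here only because it is simple; the source does not say which load-balancing mode the MC uses.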

Deployment

The Huawei NAG Cache solution supports two deployment modes: centralized deployment and distributed deployment.

Centralized deployment

In this mode, cache servers are deployed at gateway exchanges. When a user request arrives at a gateway exchange, a cache server analyzes it. If the cache server already holds the requested content, it returns that content directly, so the request does not need to cross the inter-network link. This mode reduces inter-network settlement fees.
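The hit/miss behavior of a gateway cache can be sketched in a few lines. This is a hypothetical model, assuming a byte-capacity LRU eviction policy and a caller-supplied `fetch_external` function that stands in for the inter-network link; the source does not describe NAG Cache's actual storage or eviction policy. The `external_bytes` counter shows where the settlement savings come from: only misses generate inter-network traffic.

```python
from collections import OrderedDict
from typing import Callable


class GatewayCache:
    """Hypothetical gateway cache: serve hits locally, fetch misses from the
    external network and store them, evicting least-recently-used entries."""

    def __init__(self, capacity_bytes: int, fetch_external: Callable[[str], bytes]):
        self.capacity = capacity_bytes
        self.fetch_external = fetch_external
        self.store: "OrderedDict[str, bytes]" = OrderedDict()
        self.used = 0
        self.external_bytes = 0  # traffic that crossed the inter-network link

    def get(self, url: str) -> bytes:
        if url in self.store:
            self.store.move_to_end(url)  # hit: mark as most recently used
            return self.store[url]
        body = self.fetch_external(url)  # miss: go out over the inter-network link
        self.external_bytes += len(body)
        if len(body) <= self.capacity:
            # Evict least-recently-used objects until the new one fits.
            while self.used + len(body) > self.capacity:
                _, old = self.store.popitem(last=False)
                self.used -= len(old)
            self.store[url] = body
            self.used += len(body)
        return body
```

With a 250-byte cache and 100-byte objects, the request sequence `/a, /b, /a, /c, /a` fetches only 300 bytes externally instead of 500, because the second and third requests for `/a` are served locally.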

Distributed deployment

In this mode, the scheduling subsystems of the cache servers are deployed at the network gateway, and the caching subsystems are deployed at network nodes in a layered, distributed manner. This mode supports unified scheduling of services across the entire network, enabling content scheduling and sharing among caching subsystems in different regions and avoiding redundant caching of the same external content. Compared with centralized deployment, distributed deployment further reduces inter-network settlement charges and provides a better user experience. Furthermore, because content is served from caching subsystems closer to users, distributed deployment reduces bandwidth usage between the network gateway and the distributed caching subsystems, which saves the cost of expanding intranet bandwidth.
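The sharing behavior described above can be illustrated with a central index that records which regional caching subsystem holds each object. The sketch below is a hypothetical, single-tier simplification of the layered design: the class name, the region names, and the policy of keeping exactly one copy per object are assumptions made for illustration. Content crosses the external link at most once, and later requests from other regions are served from inside the network.

```python
from typing import Callable, Dict, List


class CentralScheduler:
    """Hypothetical network-wide scheduler at the gateway. It tracks which
    regional caching subsystem holds each object so regions can share content
    instead of each fetching and caching its own copy."""

    def __init__(self, regions: List[str], fetch_external: Callable[[str], bytes]):
        self.fetch_external = fetch_external
        self.stores: Dict[str, Dict[str, bytes]] = {r: {} for r in regions}
        self.location: Dict[str, str] = {}  # url -> region holding the single copy
        self.external_fetches = 0  # requests that crossed the inter-network link
        self.peer_hits = 0  # requests served by another region inside the network

    def request(self, region: str, url: str) -> bytes:
        holder = self.location.get(url)
        if holder is None:
            # Not cached anywhere: fetch once from outside and cache it
            # in the requesting region.
            self.external_fetches += 1
            body = self.fetch_external(url)
            self.stores[region][url] = body
            self.location[url] = region
            return body
        if holder != region:
            self.peer_hits += 1  # shared from another region, no external fetch
        return self.stores[holder][url]
```

For example, if the `north` region requests `/v1` first and the `south` region requests it afterwards, the object is fetched externally only once and stored only once; the second request is a peer hit.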